What's New

- Adds official support for the iPhone 15 series of devices

- Fixes a bug where the app would sometimes fail to request camera permissions

- Fixes an issue where recorded MetaHuman Animator takes would sometimes fail to import into Unreal Engine due to an incorrect number of audio channels

- Improves support for devices that only support 30 FPS ARKit capture

App Description

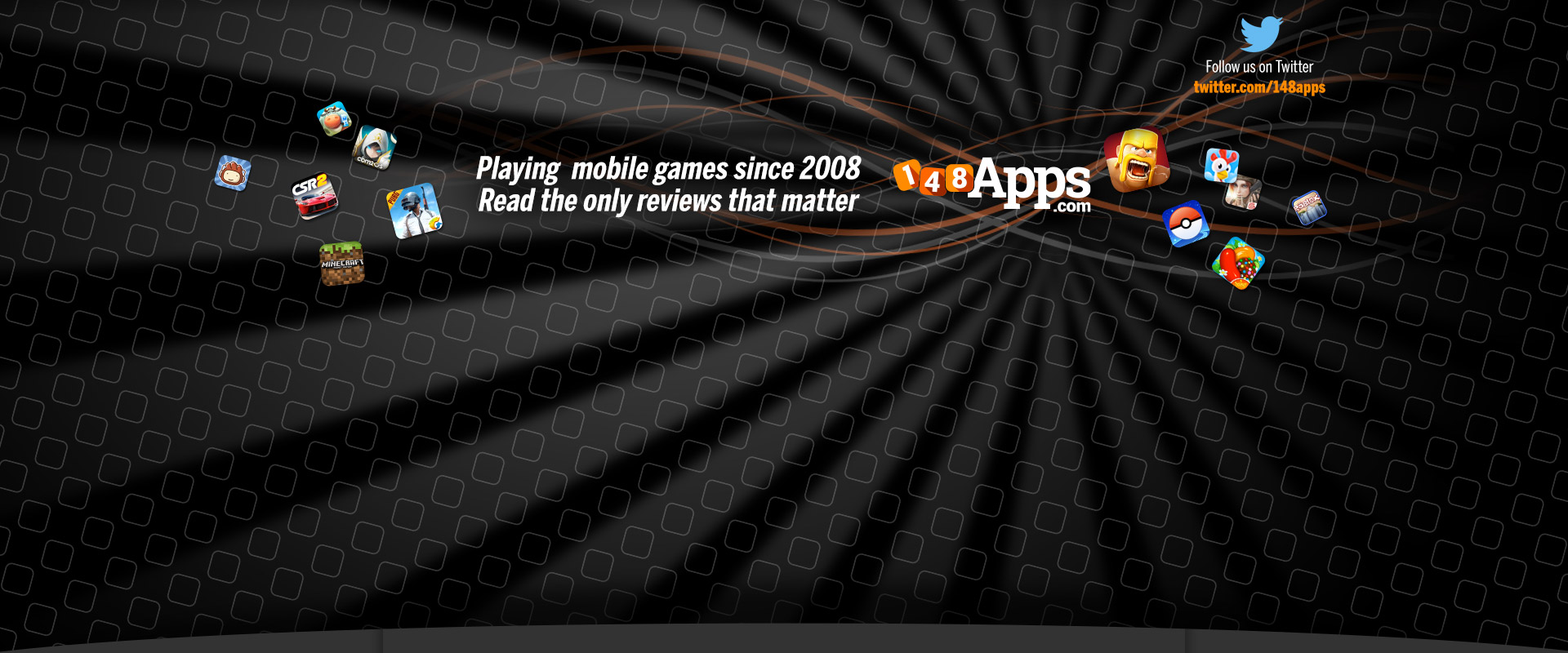

Live Link Face for effortless facial animation in Unreal Engine. Capture performances for MetaHuman Animator to achieve the highest-fidelity results, or stream facial animation in real time from your iPhone or iPad for live performances.

Capture facial performances for MetaHuman Animator:

- MetaHuman Animator uses Live Link Face to capture performances on iPhone, then applies its own processing to create high-fidelity facial animation for MetaHumans.

- The Live Link Face iOS app captures raw video and depth data, which is ingested directly from your device into Unreal Engine for use with the MetaHuman plugin.

- Facial animation created with MetaHuman Animator can be applied to any MetaHuman character in just a few clicks.

- This workflow requires an iPhone (12 or above) and a desktop PC running Windows 10/11, as well as the MetaHuman Plugin for Unreal Engine.

Real-time animation for live performances:

- Stream out ARKit animation data live to an Unreal Engine instance via Live Link over a network.

- Visualize facial expressions in real time with live rendering in Unreal Engine.

- Drive a 3D preview mesh, optionally overlaid on the video reference on the phone.

- Record the raw ARKit animation data and front-facing video reference footage.

- Tune the capture data to the individual performer and improve facial animation quality with rest pose calibration.

Timecode support for multi-device synchronization:

- Select the iPhone system clock or an NTP server as the timecode source, or use a Tentacle Sync to connect to a master clock on stage.

- Video reference is frame accurate with embedded timecode for editorial.
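As a rough illustration of what frame-accurate timecode means in editorial, the sketch below converts a frame count into an `HH:MM:SS:FF` label. It assumes non-drop-frame counting at an integer frame rate; the function name `frames_to_timecode` is hypothetical and is not part of Live Link Face or Unreal Engine.

```python
def frames_to_timecode(frame_count: int, fps: int) -> str:
    """Convert a frame count to a non-drop-frame HH:MM:SS:FF label.

    Assumption: fps is a whole number (for example 30 or 60) and
    drop-frame compensation is not applied.
    """
    if frame_count < 0 or fps <= 0:
        raise ValueError("frame_count must be >= 0 and fps must be > 0")

    frames = frame_count % fps          # frames within the current second
    total_seconds = frame_count // fps  # whole seconds elapsed
    seconds = total_seconds % 60
    minutes = (total_seconds // 60) % 60
    hours = total_seconds // 3600

    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"
```

For example, at 30 FPS the frame count 1799 corresponds to `00:00:59:29`, the last frame before the one-minute mark.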

Control Live Link Face remotely with OSC or via the MetaHuman Plugin for Unreal Engine:

- Trigger recording externally so actors can focus on their performances.

- Capture slate names and take numbers consistently.

- Extract data for processing and storage.
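To give a sense of how external recording triggers might work, here is a minimal sketch that builds OSC messages by hand and sends them over UDP using only the Python standard library. The message encoding follows the OSC 1.0 format. The addresses `/RecordStart` and `/RecordStop`, the slate and take arguments, the IP address, and port 8000 are assumptions for illustration; check the app's OSC settings and documentation for the actual commands and listener port.

```python
import socket
import struct


def osc_string(value: str) -> bytes:
    """Encode an OSC string: ASCII bytes, null-terminated, padded to 4 bytes."""
    data = value.encode("ascii") + b"\x00"
    return data + b"\x00" * ((-len(data)) % 4)


def osc_message(address: str, *args) -> bytes:
    """Build an OSC 1.0 message supporting int32 and string arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not supported in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # big-endian 32-bit integer
        elif isinstance(arg, str):
            type_tags += "s"
            payload += osc_string(arg)
        else:
            raise TypeError(f"unsupported argument type: {type(arg).__name__}")
    return osc_string(address) + osc_string(type_tags) + payload


def send_osc(host: str, port: int, message: bytes) -> None:
    """Send one OSC message as a single UDP datagram."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(message, (host, port))


# Hypothetical usage (address names, host, and port are assumptions):
# send_osc("192.168.1.50", 8000, osc_message("/RecordStart", "Scene1", 3))
# send_osc("192.168.1.50", 8000, osc_message("/RecordStop"))
```

Passing the slate name and take number with the start command is one way a control script could keep naming consistent across several devices.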

Browse and manage the captured library of takes:

- Delete takes within Live Link Face or share them via AirDrop.

- Transfer takes directly over the network when using MetaHuman Animator.

- Play back the captured video on the phone.

App Changes

- October 23, 2020: Initial release

- September 15, 2023: New version 1.3.1

- March 26, 2024: New version 1.3.2